Artificial Intelligence Seminar

Haptic Shared Control and Adjustable Autonomy for Physical Human-Robot Interaction


Wed 20 Jun 18

16:00 - 18:00


Colchester Campus

1N1.4.1


Event speaker

Dr Ayse Kucukyilmaz from the University of Lincoln


Event type

Lectures, talks and seminars

Artificial Intelligence

Event organiser

School of Computer Science and Electronic Engineering


Contact details

Dr Ayse Kucukyilmaz is a lecturer in Computer Science at the University of Lincoln and a member of the Lincoln Centre for Autonomous Systems (L-CAS). Her research interests include haptics, physical human-robot interaction and machine learning applications in adaptive autonomous systems. Before joining the University of Lincoln, Dr Kucukyilmaz worked as an Assistant Professor in the Department of Computer Engineering at Yeditepe University, Istanbul, Turkey, from 2015 to 2017. Between 2013 and 2015 she worked as a postdoctoral research associate at Imperial College London on the EU FP7 ALIZ-E project. She received her Ph.D. in Computational Sciences and Engineering from Koc University in 2013, and her B.Sc. and M.Sc. degrees in Computer Engineering from Bilkent University in 2004 and 2007, respectively. She received the Academic Excellence Award at her graduation from Koc University in 2013 and has received fellowships from TUBITAK and EC Marie Curie Actions.

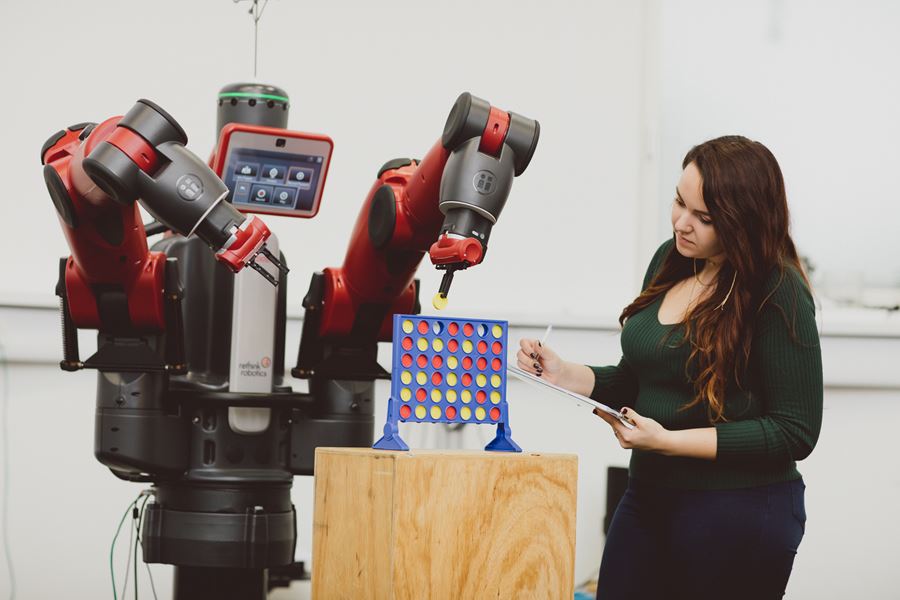

In this talk, I will present an overview of my research on haptics-based adaptation techniques for building robots that work in close contact with humans. In the first part, I will focus on the idea that communication between a human and a robot benefits from a decision-making process in which the robot dynamically adjusts its control level during the task based on the human’s intentions. I will discuss the benefits of a force-triggered dynamic role allocation mechanism that lets human-robot systems negotiate through force information to switch between leader and follower roles.

In the second part, I will discuss how to distinguish between the interaction patterns that we encountered in a haptics-enabled human-human cooperation scenario. I will describe a novel interaction taxonomy and show how force-related information can be used to describe interaction characteristics.

Finally, I will present a shared control methodology that adopts a user modelling approach to model the assistant’s behavior while aiding a wheelchair user in driving. I will discuss a triadic learning-assistance-by-demonstration (LAbD) technique, in which an assistive robot (i.e., the powered wheelchair) models its assistance function by observing the demonstrations given by a remote human assistant. The talk will end with a short discussion of some future research directions.